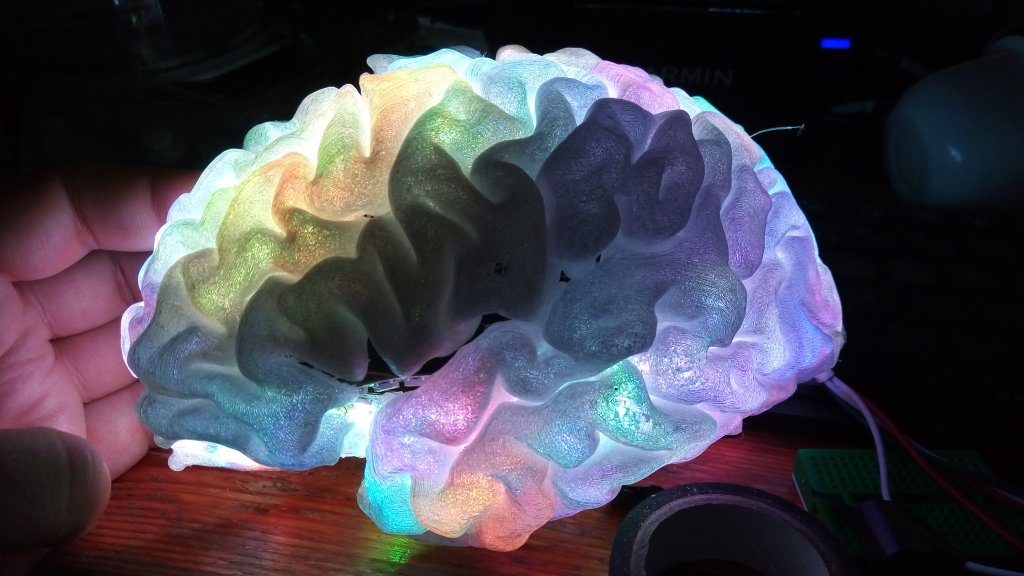

PlasticBrain is a display device built around a realistic 3D model of the brain.

It can display functional activity (fMRI, EEG, MEG, NIRS, PET…) or other information (connectivity, anatomical atlases) for data visualization, teaching, or biofeedback therapy.

It is built from a 3D print of a human brain segmented from an MRI, some RGB LED strips and an Arduino UNO to drive the display.

The LEDs are either placed directly inside the hemispheres or mounted outside on a matrix, with optical fibers guiding the light into the model.

It is distributed freely: the software under the GNU GPLv3 license, and the hardware design under the terms of the CERN Open Hardware License.

I founded a small company to develop the hardware and software and to sell the device. Integration with existing data acquisition systems (neuroscience research, clinical, or DIY systems) can be done on demand. Contact: manik <dot> bhattacharjee <at> free.fr

3D printing

From MRI to 3D printing:

- Acquisition of a 3D T1-weighted MRI with a resolution of around 1 mm isotropic.

- Segment the MRI and mesh the cortical hemispheres with BrainVisa

- Decimate and smooth the meshes, either manually with Blender, or with BrainVisa tools

- Optional: with the IntrAnat software, generate a mesh with tunnels where electrodes (SEEG or DBS) were implanted in a patient’s brain.

- Export the meshes as .obj files with Anatomist (the viewer which comes bundled with BrainVisa)

- Optional: split each hemisphere into two parts to be assembled after inserting the LEDs. Choose a cutting plane in Blender, use a Boolean modifier to cut the mesh, then duplicate the mesh and repeat the operation for the other half. Apply the modifiers.

- Export the meshes as STL files

- Load the STL file in the slicing software for your 3D printer

- Set the infill to 0% (to leave room for the LEDs) and enable support structures for overhangs.

- Our tests:

- With an Ultimaker 2 FDM printer and transparent PLA from Colorfabb: multiple prints failed because small plastic deposits on a previous layer were pushed by the print head. This moved the part and offset some layers during the 11 hours of printing. We had one successful print.

- With a Zortrax M200 FDM printer and Z-Glass material: one attempt at the Fablab de La Casemate in Grenoble failed with the same layer offset issue as the Ultimaker. The fablab was destroyed by a fire shortly afterwards, so we could not try again.

- With a Unisys UPrint 3 printer, we printed one full brain in 4 parts (two hemispheres, each split in two) in translucent white plastic. Printing took more than 45 hours, but the quality is good. The dual print head prints the supports in a material that dissolves in a chemical bath.

- We would like to try a UV resin-based printer (e.g. Formlabs Form, Wanhao Duplicator, Zortrax Inkspire). This should give good translucency and faster printing, although the support material still has to be cleaned off.

- We would also like to try laser sintering. Some materials can be translucent, and any shape can be printed without supports because the powder itself supports the part. The drawback is that it is much more expensive than the other techniques.
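
Before slicing, it can help to check the exported STL's triangle count and physical size. A mesh exported in the wrong units or decimated too aggressively shows up immediately. Below is a minimal, stdlib-only Python sketch for binary STL files. That format has an 80-byte header, a 32-bit little-endian triangle count, then 50 bytes per triangle. `stl_bounds` is a hypothetical helper, not part of the PlasticBrain or BrainVisa tools. ASCII STL files are rejected rather than parsed.

```python
import struct
import sys


def stl_bounds(data: bytes):
    """Return (triangle_count, (xmin, ymin, zmin), (xmax, ymax, zmax)) of a binary STL."""
    if len(data) < 84:
        raise ValueError("too short to be a binary STL")
    (count,) = struct.unpack_from("<I", data, 80)  # triangle count after the 80-byte header
    if count == 0:
        raise ValueError("STL contains no triangles")
    if len(data) != 84 + 50 * count:
        raise ValueError("size mismatch: ASCII STL or truncated file?")
    lo = [float("inf")] * 3
    hi = [float("-inf")] * 3
    for i in range(count):
        # Each 50-byte record: normal (3 floats), 3 vertices (9 floats), 2-byte attribute.
        values = struct.unpack_from("<12f", data, 84 + 50 * i)
        for v in range(1, 4):  # index 0 is the facet normal, skip it
            for axis in range(3):
                coord = values[3 * v + axis]
                lo[axis] = min(lo[axis], coord)
                hi[axis] = max(hi[axis], coord)
    return count, tuple(lo), tuple(hi)


if __name__ == "__main__" and len(sys.argv) > 1:
    with open(sys.argv[1], "rb") as fh:
        n, lo, hi = stl_bounds(fh.read())
    dims = " x ".join(f"{h - l:.1f}" for l, h in zip(lo, hi))
    print(f"{n} triangles, bounding box {dims}")
```

Run it as `python stl_bounds.py left_hemisphere.stl` (both file names are hypothetical). Most slicers interpret STL coordinates as millimetres, so the reported box should match the intended size of the printed part.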

Build

LEDs

Use strips of individually addressable RGB LEDs (e.g. WS2812B). At first we wanted the LEDs as dense as possible for better spatial resolution (we now use 144 LEDs per meter). In the prototype, however, the light diffuses over a large area, so less dense strips work as well. Given the scale of the model, two to three LED strips are needed per hemisphere, but for the prototype we used one per hemisphere.

Warning: LEDs can get really hot; beware of overheating!
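
A rough worst-case budget helps size the power supply for the strip densities above. The sketch assumes about 20 mA per color channel, roughly 60 mA per LED at full white. This figure is commonly quoted for WS2812B LEDs but was not measured on PlasticBrain. `led_count`, `supply_budget`, and the 20% `margin` are illustrative assumptions. Almost all of that electrical power ends up as heat inside the hemisphere, so the same numbers also explain the overheating warning.

```python
def led_count(strip_length_m, leds_per_m=144):
    """Number of LEDs on a strip of the given length (144 LEDs per meter by default)."""
    return round(strip_length_m * leds_per_m)


def supply_budget(n_leds, brightness=1.0, ma_per_channel=20.0, volts=5.0, margin=1.2):
    """Worst-case (current in A, power in W) for n_leds WS2812B-type LEDs.

    Assumption: ma_per_channel=20 mA is a commonly quoted figure, check your
    strip's datasheet. brightness (0..1) is assumed to scale current linearly,
    and margin adds headroom on top of the estimate.
    """
    if not 0.0 <= brightness <= 1.0:
        raise ValueError("brightness must be between 0 and 1")
    amps = n_leds * 3 * ma_per_channel * brightness / 1000.0 * margin
    return amps, amps * volts
```

For the 192 LEDs of the 2019 prototype, `supply_budget(192)` gives about 13.8 A with the default margin (11.5 A without it). That is far more than a USB port can deliver, which is why the strip needs its own 5 V supply. Capping brightness in software reduces both current and heat proportionally.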

- Gluing LEDs under the hemisphere surface

- Coil the LED strip inside the hemisphere and carefully place the LEDs against the surface, facing outwards, without breaking the connections between LEDs. Cover the surface as well as possible; a smoother internal surface on the printed cortex would make this easier.

- Use a hot glue gun with translucent glue to attach the LED strip to the surface

- Use the three wires of the LED strip to power and drive it. Connect the red and black wires to a 5 V power supply that can deliver enough current, checking the polarity. Connect the green data wire to an output pin of the Arduino.

- Solder a capacitor across the + and - power wires to smooth the current peaks caused by the LEDs switching on and off. Without it, these peaks can disrupt the color data signal from the Arduino. Do not forget to connect the ground of the Arduino/USB to the ground of the power supply, or you may destroy the Arduino and the LED strips.

- LED matrix and optical fibers

- Connect a WS2812b LED matrix to the Arduino

- 3D print a support to attach optical fibers on top of the LEDs

- If possible, use collimation optics to avoid losing too much light (tests in progress)

- Tie fibers together to match the shape of an SEEG electrode, with a fiber end where each electrode contact should be.

- Place the fiber-optic mock electrodes in the tunnels printed for them in the brain model.

- Build a small box with a laser cutter to house the electronics
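
WS2812B matrix panels are often wired in a serpentine (zig-zag) order. As a result, a fiber's position on the 3D-printed support is not the same as its LED's index on the data line. The sketch assumes a serpentine layout, with even rows running left to right and odd rows right to left. Check your panel, since some are wired row by row instead. The sketch builds a lookup table from hypothetical SEEG contact names to LED indices. `matrix_index` and `contacts_to_leds` are illustrative names, not the project's code.

```python
def matrix_index(row, col, width, serpentine=True):
    """Position on the data line of the LED at (row, col) in a matrix `width` LEDs wide."""
    if row < 0 or not 0 <= col < width:
        raise ValueError("position outside the matrix")
    if serpentine and row % 2 == 1:
        col = width - 1 - col  # odd rows run right to left
    return row * width + col


def contacts_to_leds(layout, width, serpentine=True):
    """Map {contact name: (row, col) of the LED under its fiber} to {contact name: LED index}."""
    return {name: matrix_index(r, c, width, serpentine) for name, (r, c) in layout.items()}
```

For example, `contacts_to_leds({"A1": (0, 0), "A2": (0, 1), "B1": (1, 0)}, width=8)` returns `{"A1": 0, "A2": 1, "B1": 15}`. Keeping this table next to the fiber support makes it possible to re-route fibers without touching the display code.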

Software:

- Code repositories (see below for details): IntrAnat, with partial support for PlasticBrain; HUG Hackathon 2018; Brainhack Geneva 2019 hackathon

- locateLEDS: locates the LEDs in the model (ongoing development)

- Integration with Brainvisa and IntrAnat to display atlases and functional data

- Arduino code, available from the repositories: setup code (lights the LEDs one by one), Christmas tree (colorful animation), and a data receiver that displays the colors sent to the Arduino over the USB connection
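
The data receiver gets colors from the computer over USB serial. The message format used in the repositories is not described here, so the sketch uses a hypothetical frame layout to illustrate the idea. Each frame has a two-byte start marker, a 16-bit LED count, and one RGB triplet per LED. A one-byte checksum lets the Arduino discard frames corrupted on the link. `encode_frame`, `decode_frame`, and the `0xAA 0x55` marker are assumptions, not the project's protocol.

```python
import struct

HEADER = b"\xAA\x55"  # hypothetical start-of-frame marker


def encode_frame(colors):
    """colors: list of (r, g, b) tuples with integer values 0..255."""
    payload = b"".join(bytes((r, g, b)) for r, g, b in colors)  # bytes() rejects values > 255
    body = struct.pack(">H", len(colors)) + payload  # big-endian LED count, then RGB data
    checksum = sum(body) & 0xFF  # simple 8-bit additive checksum
    return HEADER + body + bytes((checksum,))


def decode_frame(frame):
    """Inverse of encode_frame, as the receiving side would check it."""
    if len(frame) < 5 or frame[:2] != HEADER:
        raise ValueError("not a frame")
    (n,) = struct.unpack_from(">H", frame, 2)
    if len(frame) != 5 + 3 * n:
        raise ValueError("length does not match LED count")
    if sum(frame[2:-1]) & 0xFF != frame[-1]:
        raise ValueError("checksum mismatch")
    rgb = frame[4:-1]
    return [tuple(rgb[i:i + 3]) for i in range(0, len(rgb), 3)]
```

With pyserial installed, a frame can be sent with `serial.Serial("/dev/ttyACM0", 115200).write(encode_frame(colors))`. The port name and baud rate are assumptions and must match the Arduino sketch. For 192 LEDs a frame is 581 bytes. At 115200 baud (about 11.5 kB/s with 8N1 framing), that limits updates to roughly 20 frames per second.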

Next steps

- Coupling with OpenBCI to display the data recorded with the OpenBCI EEG headset – this might already work using the 2019 hackathon code and an LSL streamer for OpenBCI.

- Coupling with OpenViBE: display data read from files or from realtime acquisitions

- Coupling with BrainTV : display gamma-band activity recorded from implanted SEEG electrodes in realtime

- Coupling with BITalino to display heart activity or breathing as colors on the device.

- Coupling with a music player to display nice colors synchronized with music

Project history

In 2014/2015 Pierre Deman bought an Arduino to synchronize a clinical SEEG recording system with a stimulation software in Grenoble, France.

With this component at hand, we realized that we could build an old idea of mine, since 3D printing and DIY electronics were now available to the general public. The idea was to display brain activity on a realistic 3D brain, in realtime or afterwards (for example to replay an epileptic seizure in slow motion), or to use it to display anatomical regions.

In 2016 we had a 3D printed hemisphere with LED strips and we could control the display from a computer. We modified IntrAnat software to be able to drive PlasticBrain but it still needed some work.

In 2018, having moved to Geneva, we proposed the project at the 2018 HUG Hackathon (Geneva University Hospitals) and were joined by Michel Tran Ngoc and Vincent Rochas. We used an EEG headset that streamed data in realtime with LabStreamingLayer to another computer running IntrAnat. There we received the data and filtered it in realtime. To locate the EEG electrodes relative to the brain, we used a 3D scanning Android app to build a mesh of my head, textured with pictures of the EEG headset. We wanted to register this mesh to the head surface extracted from the MRI and detect the electrodes automatically from the texture. Solving the inverse problem with Cartool then allowed us to reconstruct brain activity on my cortical surface. We also wanted to display realtime activity in IntrAnat, then locate the LEDs on the cortical surface and map brain activity onto them. We did not complete the full prototype in the limited time (36 hours), but several parts were working. The final presentation and the source code are available on GitHub.

In 2019 I took part in the Brainhack Geneva hackathon with PlasticBrain. New participants joined me for 36 hours: Victor Ferat, Jelena Brankovic, Gaetan Davout, Elif Naz Gecer, Jorge Morales and Italo Fernandes. This time we had a less ambitious goal. We used a standard brain model rather than the MRI used to print the brain. We also used a standard EEG electrode layout, without measuring the real electrode positions. With these, we solved the inverse problem with Cartool, projecting activity from the EEG to cortical sources. We built the second hemisphere to have a complete brain. Using the Arduino, we lit the 192 LEDs one by one and clicked on the estimated location of each LED on the brain model in Cartool. A script then computed a matrix projecting source activity to LED values, and the Arduino applied a colormap. The signal was filtered and the power in the alpha band was computed, as it is the easiest way to show a change in brain activity: closing the eyes increases alpha power, whereas opening them lowers it. We had a working prototype at the end of the hackathon (see the video earlier on this page). The code is available on GitHub.
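
The 2019 processing chain can be sketched in a few functions: alpha-band power, projection of source activity to LEDs, then a colormap. This is a minimal stdlib-only Python illustration under stated assumptions, not the hackathon code. Alpha is taken as 8 to 12 Hz, and band power comes from a direct DFT rather than the filtering used at the hackathon. The projection matrix is a hypothetical list of weights, one row per LED and one column per source. The blue-to-red colormap stands in for the one applied on the Arduino.

```python
import math


def band_power(signal, fs, f_lo=8.0, f_hi=12.0):
    """One-sided power of `signal` in [f_lo, f_hi] Hz, computed with a direct DFT.

    A sine of amplitude A lying exactly on a DFT bin inside the band returns A**2 / 2.
    """
    n = len(signal)
    mean = sum(signal) / n
    x = [s - mean for s in signal]  # remove the DC offset
    power = 0.0
    for k in range(1, n // 2 + 1):
        if not f_lo <= k * fs / n <= f_hi:
            continue
        re = sum(v * math.cos(2 * math.pi * k * t / n) for t, v in enumerate(x))
        im = sum(v * math.sin(2 * math.pi * k * t / n) for t, v in enumerate(x))
        scale = 1.0 if 2 * k == n else 2.0  # fold in negative frequencies, except at Nyquist
        power += scale * (re * re + im * im) / (n * n)
    return power


def project(matrix, source_values):
    """LED values = matrix @ source_values (hypothetical weights: one row per LED)."""
    for row in matrix:
        if len(row) != len(source_values):
            raise ValueError("matrix columns must match the number of sources")
    return [sum(w * s for w, s in zip(row, source_values)) for row in matrix]


def to_rgb(value, vmin, vmax):
    """Linear blue (vmin) to red (vmax) colormap; values outside the range are clipped."""
    if vmax <= vmin:
        raise ValueError("vmax must be greater than vmin")
    t = min(max((value - vmin) / (vmax - vmin), 0.0), 1.0)
    return (round(255 * t), 0, round(255 * (1.0 - t)))
```

In a realtime loop, `band_power` would run on a sliding window (for example the last two seconds) of each source time series. `project` would then turn the source powers into LED values, and `to_rgb` would color them before sending a frame to the Arduino. A direct DFT costs O(N) per frequency bin, which is cheap for a handful of bins; an FFT or band-pass filter scales better with many sources.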

About us

Manik Bhattacharjee – LinkedIn

Pierre Deman

Participants in the 2018 HUG Hackathon: Manik Bhattacharjee, Pierre Deman, Michel Tran Ngoc, Vincent Rochas

Participants in the 2019 Brainhack Geneva Hackathon: Manik Bhattacharjee, Victor Ferat, Jelena Brankovic, Gaetan Davout, Elif Naz Gecer, Jorge Morales, Italo Fernandes